[PaddleHub Model Contribution] Water Meter Digit Dial Segmentation in One Line of Code

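This article shows how to contribute a water meter digit dial segmentation model to PaddleHub. We first install the required libraries and reproduce the model: prepare the dataset, configure the GPU, define the image preprocessing pipeline and the datasets, train a DeepLabv3p model, and export it. We then convert the model into a PaddleHub Module, add the code that rotates and crops the dial, and finally install and call the Module to segment the digit dial of a water meter.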


I. Install the required libraries

In [3]:
!pip install paddlex -i https://mirror.baidu.com/pypi/simple
!pip install --upgrade paddlepaddle-gpu -i https://pypi.tuna.tsinghua.edu.cn/simple
!pip install --upgrade paddlehub==2.0.1 -i https://pypi.tuna.tsinghua.edu.cn/simple
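Because the later steps depend on the installed versions (PaddleHub is pinned to 2.0.1 above), it can help to confirm what actually ended up in the environment. The following is a minimal sketch using only the standard library; the helper name `installed_versions` is illustrative and not part of any Paddle package, and `importlib.metadata` assumes Python 3.8 or later.

```python
from importlib import metadata


def installed_versions(packages):
    """Return {package: version or None} for the given distribution names."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return versions


if __name__ == "__main__":
    wanted = ["paddlex", "paddlepaddle-gpu", "paddlehub"]
    for pkg, ver in installed_versions(wanted).items():
        print(f"{pkg}: {ver or 'not installed'}")
```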

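II. Model training

The author of the original project used PaddleX for semantic segmentation. Because the trained model was not published directly, we first reproduce it here by following the author's approach.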


1. Prepare the dial dataset

In [ ]:
!unzip -oq /home/aistudio/data/data73852/water.zip

2. Train the model

2.1 Configure the GPU

In [ ]:
# Use GPU card 0 (if no GPU is available, the model is still trained on the CPU after running this code)
import matplotlib
matplotlib.use('Agg')
import os
os.environ['CUDA_VISIBLE_DEVICES'] = '0'
import paddlex as pdx

2.2 Define the image preprocessing pipeline (transforms)

Define the data processing pipelines. Training and evaluation need separate pipelines, because training includes data augmentation that evaluation does not need. In this example, training uses two augmentations, RandomHorizontalFlip and RandomPaddingCrop. For more on the image preprocessing transforms, see paddlex.seg.transforms.

In [ ]:
from paddlex.seg import transforms

train_transforms = transforms.Compose([
    transforms.RandomHorizontalFlip(),
    transforms.Resize(target_size=512),
    transforms.RandomPaddingCrop(crop_size=500),
    transforms.Normalize()
])

eval_transforms = transforms.Compose([
    transforms.Resize(512),
    transforms.Normalize()
])
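For segmentation, a geometric augmentation such as a horizontal flip has to be applied to the image and its label mask together, while normalization touches only the image. The sketch below illustrates both ideas on plain nested lists; it is not the PaddleX implementation. The function names are illustrative, and the mean and standard deviation of 0.5 are an assumption about the defaults of `transforms.Normalize()`, which the original does not state.

```python
import random


def flip_pair(image, label):
    """Mirror an image and its label mask left-to-right, row by row."""
    return [row[::-1] for row in image], [row[::-1] for row in label]


def random_horizontal_flip(image, label, prob=0.5, rng=random):
    """Flip image and label together with probability `prob`."""
    if rng.random() < prob:
        return flip_pair(image, label)
    return image, label


def normalize_pixel(value, mean=0.5, std=0.5):
    """Scale a 0-255 pixel to [0, 1], then standardise it (assumed defaults)."""
    return (value / 255.0 - mean) / std


if __name__ == "__main__":
    img, mask = flip_pair([[10, 20, 30]], [[0, 0, 1]])
    print(img, mask)
    print(normalize_pixel(255), normalize_pixel(0))
```

Flipping the pair in one call keeps every pixel aligned with its class label, which is the property a segmentation loss depends on.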

2.3 Define the dataset

Semantic segmentation uses datasets in the SegDataset format, so we load the data with pdx.datasets.SegDataset. For a description of this interface, see the pdx.datasets.SegDataset documentation.

In [ ]:
train_dataset = pdx.datasets.SegDataset(
    data_dir='water',
    file_list='water/train.txt',
    label_list='water/class_names.txt',
    transforms=train_transforms,
    shuffle=True)

eval_dataset = pdx.datasets.SegDataset(
    data_dir='water',
    file_list='water/val.txt',
    label_list='water/class_names.txt',
    transforms=eval_transforms)

2024-03-11 14:54:48 [INFO]150 samples in file water/train.txt
2024-03-11 14:54:48 [INFO]11 samples in file water/val.txt
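If the sample counts printed here look wrong, the file lists are the first thing to check. The sketch below assumes the usual PaddleX segmentation list layout, where each line holds an image path and a label path separated by whitespace, both relative to `data_dir`; the helper name `check_file_list` is illustrative, and paths containing spaces are not handled.

```python
import os


def check_file_list(data_dir, file_list):
    """Return (num_samples, missing_paths) for an 'image label' file list."""
    num_samples = 0
    missing = []
    with open(file_list, encoding="utf-8") as f:
        for line in f:
            parts = line.split()
            if not parts:
                continue  # skip blank lines
            if len(parts) != 2:
                raise ValueError(f"expected 'image label' per line, got: {line!r}")
            num_samples += 1
            for rel_path in parts:
                if not os.path.exists(os.path.join(data_dir, rel_path)):
                    missing.append(rel_path)
    return num_samples, missing


if __name__ == "__main__":
    if os.path.exists("water/train.txt"):
        count, missing = check_file_list("water", "water/train.txt")
        print(f"{count} samples, {len(missing)} missing files")
```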

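2.4 Start training

This dataset was trained on a P40. With a GPU, training is estimated to take about 13 minutes; without one, about 5 hours. For more training parameters, see the paddlex.seg.DeepLabv3p documentation. During training, the model is saved to the save_dir directory every save_interval_epochs epochs, and each time it is saved the metrics are also computed on the validation dataset. See the documentation for details on the logs.

In [ ]:
num_classes = len(train_dataset.labels)
model = pdx.seg.DeepLabv3p(num_classes=num_classes)
model.train(
    num_epochs=40,
    train_dataset=train_dataset,
    train_batch_size=4,
    eval_dataset=eval_dataset,
    learning_rate=0.01,
    save_interval_epochs=1,
    # pretrain_weights='output/deeplab4/best_model',
    save_dir='output/water')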
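The learning rate does not stay at the configured 0.01: the lr values in the log of the final epoch shrink step by step. Those values are consistent with a polynomial decay with power 0.9 over 40 x 37 steps. That is an inference from the logged numbers, not something the original states, so treat the sketch below as an illustration of the schedule rather than the PaddleX source.

```python
def poly_lr(base_lr, step, total_steps, power=0.9):
    """Polynomial decay: base_lr * (1 - step / total_steps) ** power."""
    return base_lr * (1.0 - step / total_steps) ** power


if __name__ == "__main__":
    total = 40 * 37  # 40 epochs x 37 steps per epoch, as in the training log
    # step is counted from 0, so the first step of epoch 40 is total - 37
    for step in (0, total // 2, total - 37, total - 1):
        print(step, round(poly_lr(0.01, step, total), 6))
```

With these assumptions the first step of epoch 40 comes out near 0.000362 and the last step near 1.4e-05, matching the first and last lr entries logged for that epoch.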

The output of the final epoch is shown below:

2024-03-11 15:02:56 [INFO][TRAIN] Epoch=40/40, Step=1/37, loss=0.010831, lr=0.000362, time_each_step=0.18s, eta=0:0:10
2024-03-11 15:02:56 [INFO][TRAIN] Epoch=40/40, Step=3/37, loss=0.010944, lr=0.000344, time_each_step=0.2s, eta=0:0:10
2024-03-11 15:02:57 [INFO][TRAIN] Epoch=40/40, Step=5/37, loss=0.009099, lr=0.000326, time_each_step=0.22s, eta=0:0:10
2024-03-11 15:02:57 [INFO][TRAIN] Epoch=40/40, Step=7/37, loss=0.011186, lr=0.000308, time_each_step=0.24s, eta=0:0:10
2024-03-11 15:02:57 [INFO][TRAIN] Epoch=40/40, Step=9/37, loss=0.008269, lr=0.00029, time_each_step=0.25s, eta=0:0:10
2024-03-11 15:02:58 [INFO][TRAIN] Epoch=40/40, Step=11/37, loss=0.011792, lr=0.000272, time_each_step=0.25s, eta=0:0:10
2024-03-11 15:02:58 [INFO][TRAIN] Epoch=40/40, Step=13/37, loss=0.010976, lr=0.000254, time_each_step=0.26s, eta=0:0:9
2024-03-11 15:02:58 [INFO][TRAIN] Epoch=40/40, Step=15/37, loss=0.01399, lr=0.000236, time_each_step=0.26s, eta=0:0:9
2024-03-11 15:02:58 [INFO][TRAIN] Epoch=40/40, Step=17/37, loss=0.009998, lr=0.000217, time_each_step=0.26s, eta=0:0:8
2024-03-11 15:02:58 [INFO][TRAIN] Epoch=40/40, Step=19/37, loss=0.012266, lr=0.000198, time_each_step=0.26s, eta=0:0:8
2024-03-11 15:02:58 [INFO][TRAIN] Epoch=40/40, Step=21/37, loss=0.011713, lr=0.00018, time_each_step=0.13s, eta=0:0:5
2024-03-11 15:02:58 [INFO][TRAIN] Epoch=40/40, Step=23/37, loss=0.010291, lr=0.00016, time_each_step=0.11s, eta=0:0:5
2024-03-11 15:02:58 [INFO][TRAIN] Epoch=40/40, Step=25/37, loss=0.010211, lr=0.000141, time_each_step=0.09s, eta=0:0:4
2024-03-11 15:02:59 [INFO][TRAIN] Epoch=40/40, Step=27/37, loss=0.02097, lr=0.000121, time_each_step=0.08s, eta=0:0:4
2024-03-11 15:02:59 [INFO][TRAIN] Epoch=40/40, Step=29/37, loss=0.008198, lr=0.000101, time_each_step=0.07s, eta=0:0:3
2024-03-11 15:02:59 [INFO][TRAIN] Epoch=40/40, Step=31/37, loss=0.010346, lr=8.1e-05, time_each_step=0.06s, eta=0:0:3
2024-03-11 15:02:59 [INFO][TRAIN] Epoch=40/40, Step=33/37, loss=0.009331, lr=6e-05, time_each_step=0.06s, eta=0:0:3
2024-03-11 15:02:59 [INFO][TRAIN] Epoch=40/40, Step=35/37, loss=0.01259, lr=3.8e-05, time_each_step=0.06s, eta=0:0:3
2024-03-11 15:02:59 [INFO][TRAIN] Epoch=40/40, Step=37/37, loss=0.013072, lr=1.4e-05, time_each_step=0.06s, eta=0:0:3
2024-03-11 15:02:59 [INFO][TRAIN] Epoch 40 finished, loss=0.011522, lr=0.000195 .
2024-03-11 15:02:59 [INFO]Start to evaluating(total_samples=11, total_steps=3)...
100%|██████████| 3/3 [00:02<00:00, 1.00it/s]
2024-03-11 15:03:02 [INFO][EVAL] Finished, Epoch=40, miou=0.814756, category_iou=[0.99168644 0.63782582], oacc=0.991806, category_acc=[0.99431391 0.84710874], kappa=0.774722, category_F1-score=[0.99582587 0.77886893] .
2024-03-11 15:03:03 [INFO]Model saved in output/water/epoch_40.
2024-03-11 15:03:03 [INFO]Current evaluated best model in eval_dataset is epoch_35, miou=0.8284633456567256
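The evaluation line reports both category_iou and miou, and the second is simply the mean of the first: (0.99168644 + 0.63782582) / 2 is about 0.814756. The sketch below shows how per-class intersection over union is defined, on flat lists of class ids. The function names are illustrative, and how PaddleX accumulates the counts over the validation set is not shown in the original.

```python
def iou_per_class(pred, label, num_classes):
    """Per-class IoU for two equally long flat sequences of class ids."""
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, label) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, label) if p == c or t == c)
        ious.append(inter / union if union else float("nan"))
    return ious


def mean_iou(ious):
    """Average the per-class IoU values."""
    return sum(ious) / len(ious)


if __name__ == "__main__":
    # Values copied from the category_iou field of the evaluation log
    print(round(mean_iou([0.99168644, 0.63782582]), 6))
```

The gap between the two classes is the useful signal here: the background class is almost perfect, while the dial class at about 0.64 is what pulls the mean down.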

3. Export the model

Model parameters are saved automatically during training. The trained model then has to be exported as an inference model.

In [ ]:
!paddlex --export_inference --model_dir=output/water/best_model --save_dir=./inference_model

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/setuptools/depends.py:2: DeprecationWarning: the imp module is deprecated in favour of importlib; see the module's documentation for alternative uses
  import imp
W0311 15:49:28.613981 782 device_context.cc:362] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 11.0, Runtime API Version: 10.1
W0311 15:49:28.618839 782 device_context.cc:372] device: 0, cuDNN Version: 7.6.
2024-03-11 15:49:32 [INFO]Model[DeepLabv3p] loaded.
2024-03-11 15:49:32 [INFO]Model for inference deploy saved in ./inference_model.

III. Wrap the model as a Module

Now we start the actual model conversion.

1. Convert the model

A PaddleX model can be converted into a PaddleHub model quickly with a single command:

In [ ]:
!hub convert --model_dir inference_model \
    --module_name WatermeterSegmentation \
    --module_version 1.0.0 \
    --output_dir outputs

After a successful conversion, the model is saved in the outputs folder. We unpack it:

In [ ]:
!gzip -dfq /home/aistudio/outputs/WatermeterSegmentation.tar.gz
!tar -xf /home/aistudio/outputs/WatermeterSegmentation.tar
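Outside a notebook, or on a system without gzip and tar, the same unpacking can be done from Python with the standard tarfile module. This is an equivalent sketch rather than part of the original workflow; the helper name `extract_module_archive` is illustrative. Unlike `gzip -d`, it leaves the .tar.gz file in place.

```python
import tarfile


def extract_module_archive(archive_path, dest_dir="."):
    """Unpack a .tar.gz archive into dest_dir and return the member names."""
    with tarfile.open(archive_path, "r:gz") as tar:
        names = tar.getnames()
        tar.extractall(dest_dir)
    return names


if __name__ == "__main__":
    import os

    archive = "/home/aistudio/outputs/WatermeterSegmentation.tar.gz"
    if os.path.exists(archive):
        print(extract_module_archive(archive, "."))
```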

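2. Add the missing code

The converted model is already a PaddleHub Module. In the original project, however, the author also cropped the image to extract the digits, so we need to add that part of the code.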
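One piece of that post-processing is worth isolating. When the image is rotated to level the dial, the `rotate` function in the module first computes a larger canvas so that the corners of the rotated image are not cut off. The sketch below reproduces only that arithmetic; the name `rotated_canvas_size` is illustrative and does not appear in the module.

```python
from math import cos, fabs, radians, sin


def rotated_canvas_size(width, height, angle_deg):
    """Size (width, height) of the canvas that holds the whole rotated image."""
    s = fabs(sin(radians(angle_deg)))
    c = fabs(cos(radians(angle_deg)))
    new_width = int(height * s + width * c)
    new_height = int(width * s + height * c)
    return new_width, new_height


if __name__ == "__main__":
    for angle in (0, 30, 90):
        print(angle, rotated_canvas_size(640, 480, angle))
```

At 0 degrees the canvas is unchanged, and at 90 degrees width and height swap. In between, the canvas grows in both directions, which is why the module also shifts the translation terms of the rotation matrix by half of the size difference.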

The complete module.py file is as follows:

from __future__ import absolute_import
from __future__ import division

import os
import cv2
import argparse
import base64
import paddlex as pdx
from math import *
import time, math, re
import numpy as np
import paddlehub as hub
from paddlehub.module.module import moduleinfo, runnable, serving


def base64_to_cv2(b64str):
    data = base64.b64decode(b64str.encode('utf8'))
    data = np.frombuffer(data, np.uint8)
    data = cv2.imdecode(data, cv2.IMREAD_COLOR)
    return data


def cv2_to_base64(image):
    # return base64.b64encode(image)
    data = cv2.imencode('.webp', image)[1]
    return base64.b64encode(data.tobytes()).decode('utf8')


def read_images(paths):
    images = []
    for path in paths:
        images.append(cv2.imread(path))
    return images


'''Rotate the image and crop it'''
def rotate(
        img,  # image
        pt1, pt2, pt3, pt4,
        imgOutSrc):
    # print(pt1,pt2,pt3,pt4)
    withRect = math.sqrt((pt4[0] - pt1[0]) ** 2 + (pt4[1] - pt1[1]) ** 2)  # width of the rectangle
    heightRect = math.sqrt((pt1[0] - pt2[0]) ** 2 + (pt1[1] - pt2[1]) ** 2)
    # print("rectangle width", withRect, "rectangle height", heightRect)
    angle = acos((pt4[0] - pt1[0]) / withRect) * (180 / math.pi)  # rotation angle of the rectangle
    # print("rectangle rotation angle", angle)

    if withRect > heightRect:
        if pt4[1] > pt1[1]:
            # print("rotate clockwise")
            pass
        else:
            # print("rotate counterclockwise")
            angle = -angle
    else:
        # print("rotate counterclockwise")
        angle = 90 - angle

    height = img.shape[0]  # original image height
    width = img.shape[1]  # original image width
    rotateMat = cv2.getRotationMatrix2D((width / 2, height / 2), angle, 1)  # rotate the image by angle
    heightNew = int(width * fabs(sin(radians(angle))) + height * fabs(cos(radians(angle))))
    widthNew = int(height * fabs(sin(radians(angle))) + width * fabs(cos(radians(angle))))

    rotateMat[0, 2] += (widthNew - width) / 2
    rotateMat[1, 2] += (heightNew - height) / 2
    imgRotation = cv2.warpAffine(img, rotateMat, (widthNew, heightNew), borderValue=(255, 255, 255))
    # cv2.imwrite("imgRotation.webp", imgRotation)

    # Coordinates of the four points after rotation
    [[pt1[0]], [pt1[1]]] = np.dot(rotateMat, np.array([[pt1[0]], [pt1[1]], [1]]))
    [[pt3[0]], [pt3[1]]] = np.dot(rotateMat, np.array([[pt3[0]], [pt3[1]], [1]]))
    [[pt2[0]], [pt2[1]]] = np.dot(rotateMat, np.array([[pt2[0]], [pt2[1]], [1]]))
    [[pt4[0]], [pt4[1]]] = np.dot(rotateMat, np.array([[pt4[0]], [pt4[1]], [1]]))

    # Handle the flipped case
    if pt2[1] > pt4[1]:
        pt2[1], pt4[1] = pt4[1], pt2[1]
    if pt1[0] > pt3[0]:
        pt1[0], pt3[0] = pt3[0], pt1[0]

    imgOut = imgRotation[int(pt2[1]):int(pt4[1]), int(pt1[0]):int(pt3[0])]
    cv2.imwrite(imgOutSrc, imgOut)  # the cropped, rotated rectangle


@moduleinfo(
    name='WatermeterSegmentation',
    type='CV/semantic_segmentation',
    author='郑博培、彭兆帅',
    author_email='2733821739@qq.com',
    summary='Digital dial segmentation of water meter',
    version='1.0.0')
class MODULE(hub.Module):
    def _initialize(self, **kwargs):
        self.default_pretrained_model_path = os.path.join(
            self.directory, 'assets')
        self.model = pdx.deploy.Predictor(self.default_pretrained_model_path,
                                          **kwargs)

    def predict(self,
                images=None,
                paths=None,
                data=None,
                batch_size=1,
                use_gpu=False,
                **kwargs):
        all_data = images if images is not None else read_images(paths)
        total_num = len(all_data)
        loop_num = int(np.ceil(total_num / batch_size))

        res = []
        for iter_id in range(loop_num):
            batch_data = list()
            handle_id = iter_id * batch_size
            for image_id in range(batch_size):
                try:
                    batch_data.append(all_data[handle_id + image_id])
                except IndexError:
                    break
            out = self.model.batch_predict(batch_data, **kwargs)
            res.extend(out)
        return res

    def cutPic(self, picUrl):
        # seg = hub.Module(name='WatermeterSegmentation')
        image_name = picUrl
        im = cv2.imread(image_name)
        result = self.predict(images=[im])

        # Convert the polygon into a rectangle
        contours, hier = cv2.findContours(result[0]['label_map'], cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)
        print(type(contours[0]))
        n = 0
        m = 0
        # Keep the contour with the most points
        for index, contour in enumerate(contours):
            if len(contour) > n:
                n = len(contour)
                m = index

        image = cv2.imread(image_name)
        # Get the minimum-area rectangle
        rect = cv2.minAreaRect(contours[m])
        box = np.intp(cv2.boxPoints(rect))  # the four corner points of the rectangle
        tmp = cv2.drawContours(image, [box], 0, (0, 0, 255), 3)
        imgOutSrc = 'result.webp'
        rotate(image, box[0], box[1], box[2], box[3], imgOutSrc)
        res = []
        res.append(imgOutSrc)
        return res

    @serving
    def serving_method(self, images, **kwargs):
        """
        Run as a service.
        """
        images_decode = [base64_to_cv2(image) for image in images]
        results = self.predict(images_decode, **kwargs)
        res = []
        for result in results:
            if isinstance(result, dict):
                # result_new = dict()
                for key, value in result.items():
                    if isinstance(value, np.ndarray):
                        result[key] = cv2_to_base64(value)
                    elif isinstance(value, np.generic):
                        result[key] = value.item()
            elif isinstance(result, list):
                for index in range(len(result)):
                    for key, value in result[index].items():
                        if isinstance(value, np.ndarray):
                            result[index][key] = cv2_to_base64(value)
                        elif isinstance(value, np.generic):
                            result[index][key] = value.item()
            else:
                raise RuntimeError('The result cannot be used in serving.')
            res.append(result)
        return res

    @runnable
    def run_cmd(self, argvs):
        """
        Run as a command.
        """
        self.parser = argparse.ArgumentParser(
            description="Run the {} module.".format(self.name),
            prog='hub run {}'.format(self.name),
            usage='%(prog)s',
            add_help=True)
        self.arg_input_group = self.parser.add_argument_group(
            title="Input options", description="Input data. Required")
        self.arg_config_group = self.parser.add_argument_group(
            title="Config options",
            description=
            "Run configuration for controlling module behavior, not required.")
        self.add_module_config_arg()
        self.add_module_input_arg()
        args = self.parser.parse_args(argvs)
        results = self.predict(
            paths=[args.input_path],
            use_gpu=args.use_gpu)
        return results

    def add_module_config_arg(self):
        """
        Add the command config options.
        """
        self.arg_config_group.add_argument(
            '--use_gpu',
            type=bool,
            default=False,
            help="whether use GPU or not")

    def add_module_input_arg(self):
        """
        Add the command input options.
        """
        self.arg_input_group.add_argument(
            '--input_path', type=str, help="path to image.")


if __name__ == '__main__':
    module = MODULE(directory='./new_model')
    images = [cv2.imread('./cat.webp'), cv2.imread('./cat.webp'), cv2.imread('./cat.webp')]
    res = module.predict(images=images)

3. Test the model

First, install the Module we just wrote:

In [ ]:
!hub install WatermeterSegmentation

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/setuptools/depends.py:2: DeprecationWarning: the imp module is deprecated in favour of importlib; see the module's documentation for alternative uses
  import imp
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/matplotlib/__init__.py:107: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working
  from collections import MutableMapping
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/matplotlib/rcsetup.py:20: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working
  from collections import Iterable, Mapping
/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/matplotlib/colors.py:53: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working
  from collections import Sized
[2024-03-11 16:42:50,225] [ INFO] - Successfully uninstalled WatermeterSegmentation
[2024-03-11 16:42:50,441] [ INFO] - Successfully installed WatermeterSegmentation-1.0.0

Calling the model:

In [4]:
import cv2
import paddlehub as hub

seg = hub.Module(name='WatermeterSegmentation')
res = seg.cutPic(picUrl="water/images/val/20200521105032.webp")

[2024-03-11 17:13:36,113] [ WARNING] - The _initialize method in HubModule will soon be deprecated, you can use the __init__() to handle the initialization of the object


The prediction results are as follows.

Input image:

The final cropped image looks like this:

游乐网为非赢利性网站,所展示的游戏/软件/文章内容均来自于互联网或第三方用户上传分享,版权归原作者所有,本站不承担相应法律责任。如您发现有涉嫌抄袭侵权的内容,请联系youleyoucom@outlook.com。

同类文章

同类文章

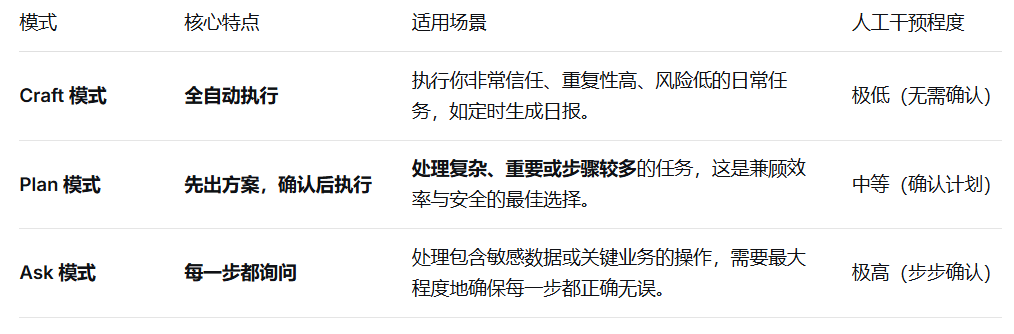

说一下WorkBuddy 的 Plan 模式

如何切换到 Plan 模式 想体验这种更可控的方式?操作很简单。在 WorkBuddy 主界面的右下角,你会看到一个“安全模式切换”的下拉菜单,从中选择“Plan”选项即可完成切换。 核心使用流程 光说概念可能有点抽象,咱们直接看个例子。假设你手头有个任务:“把桌面上‘项目报告’文件夹里所有Exce

滴滴出行开放打车 Skill,“龙虾”叫车全程不需要切换 App

滴滴出行全网首发语音打车Skill,一句话智能叫车全攻略 近日,滴滴出行正式上线了一项创新的语音交互功能:全面开放打车Skill。这意味着,用户只需通过语音指令,即可完成从叫车到行程追踪的全流程,真正实现“动口不动手”的便捷出行体验。 整个操作过程,包括目的地搜索、车型比价、下单确认、查看订单状态等

阿里千问 AI 眼镜接入蚂蚁 GPASS:语音解锁共享单车、停车缴费

当AI眼镜学会“跑腿”:语音解锁单车,无感支付停车费 近来,智能穿戴领域的一个新动向值得关注:阿里旗下的千问AI眼镜,正式接入了蚂蚁集团的GPASS平台。这可不是一次简单的功能叠加,它意味着,诸如共享单车骑行、停车缴费这一系列高频的“AI办事”功能,开始从手机屏幕转移到了你的眼前。 简单说,借助GP

Workbuddy注册额外积分

角色定位与核心任务目标 明确了基本定位后,我们直接切入核心:作为一名专业的文章优化师,我的核心职责在于,将那些带有明显AI生成特征的文本,深度重塑为拥有个人特色与行业洞见的优质内容。 换句话说,这项任务的关键在于实施一次“精准的换血手术”。你必须严格保证原文所有的事实依据、核心观点、逻辑框架,以及每

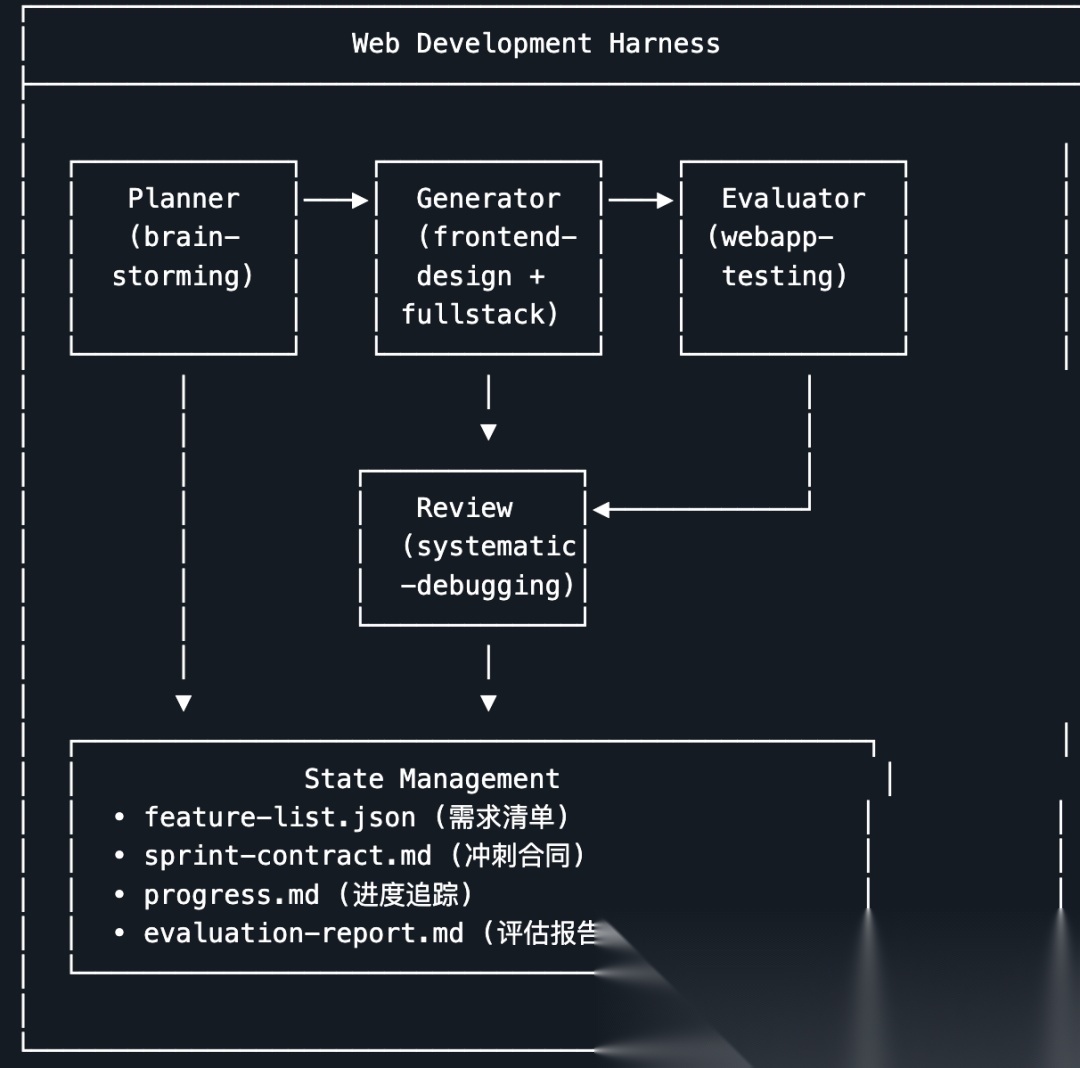

我把 Anthropic 的 Harness 工程思想做成了一个 Skill

用AI写代码,难在哪儿? 用AI生成代码本身并不难,真正的挑战在于让它稳定地交付一个真正可用的东西。这篇文章,我们就来聊聊Anthropic工程团队是如何破解这个难题的,以及我如何将这套方法论落地成了一个可以复用的实战工具。 用 AI 写代码有多难?不是写不出来难,是让它稳定交付可用的东西很难。这篇

- 日榜

- 周榜

- 月榜

1

1

2

2

3

3

4

4

5

5

6

6

7

7

8

8

9

9

10

10

相关攻略

相关攻略

2015-03-10 11:25

2015-03-10 11:25

2015-03-10 11:05

2015-03-10 11:05

2021-08-04 13:30

2021-08-04 13:30

2015-03-10 11:22

2015-03-10 11:22

2015-03-10 12:39

2015-03-10 12:39

2022-05-16 18:57

2022-05-16 18:57

2025-05-23 13:43

2025-05-23 13:43

2025-05-23 14:01

2025-05-23 14:01

热门教程

热门教程

- 游戏攻略

- 安卓教程

- 苹果教程

- 电脑教程